In an era where every microsecond of data can represent a significant business opportunity or a critical operational risk, the infrastructure used to store that information must be dependable. From the predictive maintenance of smart factories to the complex monitoring of global financial markets, the common thread is the need to process information in a strict chronological sequence. Many technical architects are now prioritizing time series database (TSDB) engines to ensure their systems can handle the relentless influx of telemetry without compromising on query speed or data integrity. These platforms provide the high-resolution visibility required to turn raw streams of numbers into actionable intelligence.

The Technical Advantage of Chronological Storage

Standard relational databases often struggle when faced with millions of write operations per second because they are built to manage complex relationships between varying data types. In contrast, time series databases are optimized for append-heavy, linear growth. By treating the timestamp as the primary key, these systems can place data points onto physical storage in roughly the order they arrive. This sequential layout is a massive advantage during retrieval, as the system can read a time range as one contiguous chunk in a single pass rather than jumping across different sections of a disk.
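To make the retrieval advantage concrete, here is a minimal sketch of a range query over time-ordered data. The two lists are a hypothetical in-memory stand-in for an on-disk segment, and `range_scan` is an illustrative name rather than any particular engine's API.

```python
from bisect import bisect_left, bisect_right

# Hypothetical stand-in for an append-only segment: timestamps are stored
# in ascending order, with values at the matching positions.
timestamps = [1000, 1010, 1020, 1030, 1040, 1050]  # epoch seconds
values = [20.1, 20.3, 20.2, 20.6, 20.9, 21.0]

def range_scan(start, end):
    """Return (timestamp, value) pairs with start <= timestamp <= end."""
    lo = bisect_left(timestamps, start)   # first position at or after start
    hi = bisect_right(timestamps, end)    # one past the last position at or before end
    # The result is one contiguous slice, so no jumping around is needed.
    return list(zip(timestamps[lo:hi], values[lo:hi]))

window = range_scan(1010, 1030)
```

Because the data is already sorted by time, the query reduces to two binary searches and a single contiguous read.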

This architecture also simplifies data management. Because time series data is rarely modified once written, the database can use immutable storage files. Readers never have to wait on locks held by writers, and this lack of contention allows for a much higher degree of concurrency, meaning a system can ingest massive amounts of sensor data while simultaneously serving complex analytical dashboards to hundreds of users across an organization.

Efficiency Through Smart Compression and Lifecycle Rules

The cost of storing high-frequency data can escalate rapidly, making compression a practical necessity rather than a luxury. Specialized engines use algorithms that take advantage of the fact that many sensor readings change only slightly from one sample to the next. By storing only the “deltas” (the small changes between readings) rather than the entire number every time, these systems can achieve compression ratios that far exceed those of general-purpose storage.
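The delta idea can be sketched in a few lines. This is a simplified illustration that assumes integer readings (for example, temperatures scaled to hundredths of a degree). Production engines typically layer further encoding on top of the deltas, which is not shown here.

```python
from itertools import accumulate

def delta_encode(readings):
    """Keep the first reading as-is, then store only the change from the previous one."""
    if not readings:
        return []
    return [readings[0]] + [cur - prev for prev, cur in zip(readings, readings[1:])]

def delta_decode(deltas):
    """Rebuild the original readings with a running sum over the deltas."""
    return list(accumulate(deltas))

readings = [2150, 2151, 2151, 2149, 2152]  # illustrative values only
encoded = delta_encode(readings)           # [2150, 1, 0, -2, 3]
restored = delta_decode(encoded)
```

After the first entry, the encoded values are small numbers close to zero, which need far fewer bits to store than the full readings.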

Furthermore, these platforms allow for granular lifecycle management. An organization might decide that second-by-second data is only necessary for the first 30 days of a project. After that, the system can automatically downsample that data into hourly averages and move the original high-resolution records to cheaper cold storage. This ensures that the most expensive, high-performance hardware is always reserved for the most relevant and timely information.
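As a rough sketch of the downsampling step, the function below collapses raw points into hourly averages. The name `downsample_hourly` and the sample points are hypothetical. A real platform would typically express this as a declarative retention or rollup policy rather than application code.

```python
from collections import defaultdict

SECONDS_PER_HOUR = 3600

def downsample_hourly(points):
    """points: iterable of (epoch_seconds, value). Returns {hour_start: mean value}."""
    sums = defaultdict(lambda: [0.0, 0])  # hour_start -> [running total, count]
    for ts, value in points:
        bucket = ts - ts % SECONDS_PER_HOUR  # round down to the start of the hour
        acc = sums[bucket]
        acc[0] += value
        acc[1] += 1
    return {bucket: total / count for bucket, (total, count) in sorted(sums.items())}

raw = [(0, 10.0), (1800, 20.0), (3600, 30.0), (5400, 50.0), (7199, 40.0)]
hourly = downsample_hourly(raw)
```

Here five raw points become two hourly records, and the originals could then be moved to cheaper storage.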

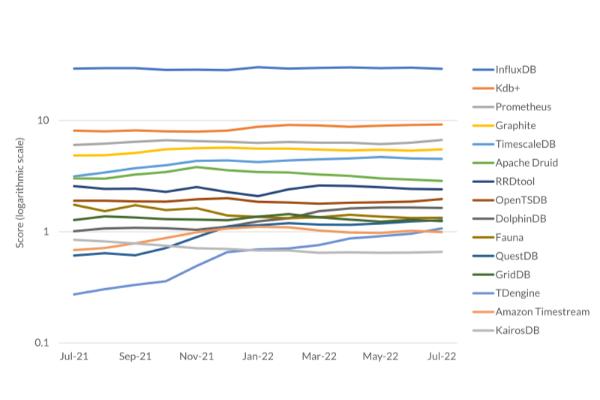

Analyzing the Competitive Industry Ranking

When evaluating a new technology stack, understanding how a platform performs relative to its peers is essential. In the current time series database ranking, the leading systems are distinguished by their ability to scale horizontally. This means that as a company’s sensor network expands, it can simply add more nodes to its database cluster rather than having to migrate to a larger, more expensive server. This “elasticity” is a core requirement for any cloud-native strategy.

The rankings also reflect the shift toward “multi-model” capabilities. While the core of these systems is time series, the best platforms now allow for the storage of metadata and tags that provide context to the numbers. Being able to query “all sensors in the Sydney warehouse” alongside the raw temperature data allows for a level of operational insight that a basic flat-file storage system simply cannot provide.

Driving Innovation Through Real-Time Observability

The primary goal of any monitoring system is to reduce the “mean time to detection” for anomalies. By using a database that supports native stream processing, companies can analyze data the moment it arrives. For a utility company managing a power grid, this might mean identifying a frequency imbalance in milliseconds and automatically rerouting power to prevent a blackout. This level of responsiveness is only possible when the database and the analytical logic are tightly integrated.
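The idea of checking data as it arrives can be illustrated with a small generator. The 50 Hz nominal frequency, the 0.2 Hz tolerance, and the sample readings are all assumptions chosen for illustration. A real grid operator would use its own limits, and a real engine would run this logic inside its stream-processing layer.

```python
def detect_anomalies(stream, nominal=50.0, tolerance=0.2):
    """Yield (timestamp, hz) for each reading outside nominal +/- tolerance.

    Each reading is checked the moment it is consumed, without waiting for
    a batch to accumulate.
    """
    for ts, hz in stream:
        if abs(hz - nominal) > tolerance:
            yield ts, hz

# Illustrative readings: (seconds since start, measured frequency in Hz).
readings = [(0, 50.01), (1, 49.98), (2, 50.35), (3, 50.02), (4, 49.70)]
alerts = list(detect_anomalies(readings))
```

In this sample, the readings at seconds 2 and 4 fall outside the band and would trigger an alert.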

Beyond emergency response, this technology facilitates long-term optimization. By looking at historical trends over years, manufacturers can identify subtle “drifts” in machine performance that might not be visible in a weekly report. This allows for a proactive approach to maintenance, where parts are replaced based on their actual condition and usage patterns rather than a generic calendar-based schedule.

Simplifying Complex Data Workflows

One of the biggest hurdles in data science is “data cleaning” and preparation. Dedicated temporal engines simplify this by providing built-in functions for handling missing data, normalizing time intervals, and aligning disparate data streams. Instead of writing complex scripts to join a temperature sensor reading with a pressure sensor reading taken five milliseconds later, the database can “bucket” these events automatically into a unified view.
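The bucketing described above might look something like the following sketch. The function name, stream names, and 100 ms bucket width are hypothetical, and the function keeps only the last value per stream in each bucket. Real engines offer a choice of aggregations and gap-filling strategies.

```python
def bucket_align(streams, width_ms):
    """Group points from several streams into shared fixed-width time buckets.

    streams: {stream_name: [(timestamp_ms, value), ...]}
    Returns {bucket_start_ms: {stream_name: last value seen in that bucket}}.
    """
    rows = {}
    for name, points in streams.items():
        for ts, value in points:
            bucket = ts - ts % width_ms  # round down to the bucket boundary
            rows.setdefault(bucket, {})[name] = value
    return dict(sorted(rows.items()))

streams = {
    "temperature": [(1000, 21.5), (1100, 21.7)],
    "pressure": [(1005, 101.2), (1210, 101.4)],
}
aligned = bucket_align(streams, width_ms=100)
```

The temperature reading at 1000 ms and the pressure reading taken 5 ms later land in the same row, while buckets that lack a reading from one stream simply omit that key.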

This reduction in complexity speeds up the development of new applications. Whether a team is building a new IoT dashboard or a sophisticated machine learning model, they can spend more time on the logic that adds value and less time on the plumbing of data movement. This agility is a significant competitive advantage in industries where being first to market with an insight can define success.

Strategic Insights and Performance Auditing

As an organization grows, it becomes necessary to analyze how the database infrastructure is coping with increasing complexity. This analysis usually focuses on “cardinality,” which measures the number of unique data series being tracked. A system that performs well with a thousand sensors might struggle when that number reaches a million if its indexing strategy isn’t robust. Modern engines use inverted indexes and specialized caches to ensure that searching through millions of unique device IDs remains fast.
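A toy version of an inverted index shows why tag lookups stay cheap as the number of series grows. The series IDs, tag names, and `find_series` helper below are all hypothetical. Real engines keep these structures on disk and in caches, in far more compact forms.

```python
from collections import defaultdict

# Hypothetical catalog: series ID -> tags describing that series.
series = {
    1: {"site": "sydney", "metric": "temperature"},
    2: {"site": "sydney", "metric": "pressure"},
    3: {"site": "perth", "metric": "temperature"},
}

# Inverted index: (tag key, tag value) -> set of series IDs carrying that tag.
index = defaultdict(set)
for series_id, tags in series.items():
    for key, value in tags.items():
        index[(key, value)].add(series_id)

def find_series(**wanted):
    """Return IDs of series matching every requested tag."""
    postings = [index.get(pair, set()) for pair in wanted.items()]
    # With no filters, every series matches; otherwise intersect the sets.
    return set.intersection(*postings) if postings else set(series)

cardinality = len(series)  # number of unique series being tracked
sydney = find_series(site="sydney")
sydney_temperature = find_series(site="sydney", metric="temperature")
```

Each query touches only the sets for the requested tags instead of scanning every series, which is what keeps lookups quick at high cardinality.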

Regular performance auditing also helps in capacity planning. By understanding how CPU and memory usage scales with ingestion rates, IT teams can estimate when they will need to add more resources to the cluster. This prevents “bottlenecks” that could lead to data loss or delayed dashboards, ensuring that the business always has a clear view of its operations.
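One simple way to approach this kind of estimate is a straight-line fit of CPU usage against ingestion rate. The sketch below assumes the relationship is roughly linear, which may not hold near saturation. The sample numbers and the 80% ceiling are made up for illustration, not measurements from any system.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit; returns (slope, intercept) for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

# Illustrative audit samples: ingestion rate (thousand points/sec) vs CPU (%).
rates = [100, 200, 300, 400]
cpu = [20, 35, 50, 65]

slope, intercept = fit_line(rates, cpu)

cpu_ceiling = 80  # assumed threshold at which the team wants to add nodes
rate_at_ceiling = (cpu_ceiling - intercept) / slope
```

With these sample values the fit suggests the ceiling would be reached at around 500 thousand points per second, giving the team a target to plan against.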

The Future of Edge and Distributed Intelligence

We are moving toward a future where “intelligence” is pushed further out to the edge of the network. The next generation of databases must be able to run on small, resource-constrained devices at a remote site while staying synchronized with a massive central repository. This allows for local decision-making, which is vital for safety-critical systems, while still contributing to a global data lake for long-term strategic analysis.

Security and compliance are also at the forefront of this evolution. As data sovereignty laws become more complex, the ability to control exactly where data is stored and who has access to it is paramount. Leading platforms are incorporating fine-grained access controls and native encryption to ensure that even in a highly distributed environment, the data remains secure and the organization remains compliant with global regulations.

Establishing a Sustainable Data Ecosystem

A sustainable data ecosystem is one that can grow without becoming unmanageable or prohibitively expensive. By selecting a storage engine that is specifically tuned for time-stamped information, organizations can avoid the “technical debt” of trying to shoehorn high-velocity data into a system that wasn’t built for it. This foresight leads to cleaner code, more reliable systems, and a faster path from raw data to business value.

The transition to a dedicated time series architecture is an investment in the future of the enterprise. As the world becomes more instrumented and the volume of data continues its exponential climb, the systems that can handle the flow of time will be the most valuable assets in any company’s portfolio. Mastery over temporal data is the key to unlocking the next level of operational excellence and innovation.
